Ethics and Regulation in Digital Marketing

Advanced Digital Marketing

Agenda

Ethics in Digital Marketing

Regulations

Platform Guidelines

Email Marketing, Ethics, and Regulation

Ethical Frameworks for Digital Marketing

Ethics in Digital Marketing

Uniqueness of Digital Marketing

- In a digital marketing environment, individuals leave behind a trail of data as they interact with various digital platforms.

- Digital trail

- Digital footprint

- Marketers have access to an unprecedented amount of data about consumers’ behaviors, preferences, and demographics at the individual level.

- Demographic identifiers: name, age, gender, location, education

- Psychographic identifiers: interests, hobbies, lifestyle, values

- Webographics: browsing history, search queries, online purchases, social media activities

- This granular data can be used without consent, leading to ethical concerns regarding privacy, data security, and informed consent.

Dilemma

- Customer Empowerment: Shift in power from marketers to customers.

- Digital tools have led to customer empowerment.

- Marketers are adapting their marketing strategies toward customer-centric approaches.

- Signs of Customer Empowerment

- Market information is easily accessible to customers

- Communication is two-way

- Customers can decide which channel to use to interact with firms

- The voice in consumer space has gained prominence over brand space

- Emergence of concepts like prosumer and customer co-creation

A Dilemma

- Privacy vs. Personalization

- Data Security vs. Data Utility

- Scale/Reach vs. Digital Inclusion

- Transparency/Informed Consent vs. Frictionless Experience

- Engagement/Conversion Goals vs. Manipulative Practices

- Algorithmic Optimization vs. Fairness

The ethical challenges in digital marketing arise from the tension between leveraging data for business benefits and respecting consumer rights and well-being.

Ethical Issues: Data & Autonomy

- Privacy Concerns

- Collecting and using personal data without consent

- Invasive tracking and profiling of consumers

- Data Security

- Risk of data breaches and unauthorized access

- Inadequate protection of sensitive information

- Informed Consent

- Lack of transparency about data collection and usage

- Misleading or unclear privacy policies

- Manipulative Practices

- Exploiting consumer vulnerabilities through targeted advertising

- Using AI to create deceptive content or experiences

Ethical Issues: Fairness

- Algorithmic Bias

- AI systems perpetuating existing biases in data

- Discriminatory outcomes in targeting and personalization

- Digital Divide

- Unequal access to digital technologies and platforms

- Exclusion of certain demographics from digital marketing efforts

- Social Responsibility

- Balancing profit motives with ethical considerations

- Promoting positive social impact through digital marketing initiatives

- Long-term Consequences

- Considering the broader implications of digital marketing strategies

- Avoiding short-term gains at the expense of long-term ethical standards

Ethical Issues: Governance & Trust

- Regulatory Compliance

- Navigating complex and evolving digital marketing regulations

- Ensuring adherence to data protection laws (e.g., GDPR, CCPA)

- Intellectual Property

- Unauthorized use of copyrighted content

- Challenges in protecting digital assets

- Transparency and Accountability

- Lack of clarity about AI decision-making processes

- Difficulty in holding companies accountable for unethical practices

- Consumer Trust

- Erosion of trust due to unethical practices

- Importance of building and maintaining consumer confidence

Consequences

- Customer perceptions of “data vulnerability” (access, breach, spillover, misuse) reduce trust, increase negative WOM and switching; transparency and customer control mitigate harms.

- Martin et al. (2017)

- Data‑breach announcements lower customer spending and trigger channel migration; high‑patronage customers are more forgiving.

- Janakiraman et al. (2018)

- Personalization increases effectiveness only when consumers trust the firm and when targeting is not perceived as intrusive — perceived invasiveness can backfire (reduced response, negative attitudes).

- Bleier and Eisenbeiss (2015)

- Privacy regulation has both intended consumer-protection benefits and important unintended economic and distributional costs. Regulators and firms need to weigh trade-offs instead of assuming data restrictions are uniformly beneficial.

- Dubé et al. (2025)

Reflection

- Individual Task:

- Name one concrete benefit of data-driven personalization for consumers (example from readings or your own idea).

- Name one concrete ethical risk or unintended consequence (e.g., pick from privacy, exclusion, market concentration, or algorithmic bias).

- Propose one short, practical mitigation (company policy, regulatory tweak, or tech solution) and a one-line reason why it helps.

- In Pair:

- Share your answers with your partner.

- Discuss similarities and differences in your responses.

- Identify one common theme or insight that emerged from your discussion.

Hint: Personalization can help underserved niche buyers, but also note privacy rules may favor incumbents

Regulations

Key Regulations

- General Data Protection Regulation (GDPR)

- Enforced in the European Union (EU)

- California Consumer Privacy Act (CCPA)

- Enforced in California, USA

- ePrivacy Directive or Cookie Law (ePD)

- Enforced in the EU

- Privacy Act and Spam Act

- Enforced in Australia

- Personal Information Protection Law (PIPL), Algorithm Law, and The “Anti-Spam” Rules (MIIT Regulations)

- Enforced in China

- Data (Use and Access) Act (DUAA) and Privacy and Electronic Communications Regulations (PECR)

- Enforced in the UK

- Digital Personal Data Protection Act (DPDP)

- Enforced in India

- Protecting Children Online

- Children’s Online Privacy Protection Act (US)

- Children’s Online Privacy Code (Australia)

GDPR

Consent Must Be Active & “Granular”

- Active Opt-in: Silence, pre-ticked boxes, or inactivity do not count as consent. Use an explicit “I agree” action.

- Granularity: Obtain separate consent for each distinct activity; a single consent cannot cover both email marketing and analytics.

- Easy withdrawal: Allow users to withdraw consent as easily as they gave it.
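To make the three consent rules concrete, here is a minimal sketch of a per-purpose consent record. The class, method, and purpose names are hypothetical and not taken from any consent-management product; the point is only that every purpose defaults to "no", each purpose is granted separately, and withdrawal is as simple as granting.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical purpose names; a real consent platform defines its own list.
PURPOSES = ("email_marketing", "analytics", "personalization")


@dataclass
class ConsentRecord:
    user_id: str
    # Every purpose starts as "not consented": silence is not consent.
    granted: dict = field(default_factory=lambda: {p: False for p in PURPOSES})
    # Audit trail of (timestamp, purpose, granted?) entries for accountability.
    history: list = field(default_factory=list)

    def _set(self, purpose: str, value: bool) -> None:
        if purpose not in self.granted:
            raise ValueError(f"unknown purpose: {purpose}")
        self.granted[purpose] = value
        self.history.append((datetime.now(timezone.utc), purpose, value))

    def give(self, purpose: str) -> None:
        """Record an explicit 'I agree' action for exactly one purpose."""
        self._set(purpose, True)

    def withdraw(self, purpose: str) -> None:
        """Withdrawal is a single call, as easy as giving consent."""
        self._set(purpose, False)

    def allows(self, purpose: str) -> bool:
        return self.granted.get(purpose, False)


record = ConsentRecord("user-42")
record.give("email_marketing")
print(record.allows("email_marketing"))  # True
print(record.allows("analytics"))        # False: a separate purpose, never agreed to
record.withdraw("email_marketing")
print(record.allows("email_marketing"))  # False
```

Keeping the history list alongside the current state is a design choice for demonstrating compliance later; it is not something the slide prescribes.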

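The “Right to Object” to Direct Marketing

- Users can object at any time to their data being used for direct marketing, including related profiling; once they do, you must stop using it for that purpose.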

Transparency & Privacy Policies

- Use plain language, not marketing jargon.

- Clear information on data collection, usage, sharing, and retention.

Restrictions on Profiling

- Marketers must ensure they have a lawful basis (usually explicit consent) to track user behavior.

Data Minimization

- You can no longer collect data “just in case” it might be useful later.

- Stored data has a shelf life and must be deleted when no longer needed.
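One way to picture the "shelf life" rule is a scheduled purge job. This sketch assumes each stored record carries the purpose it was collected for and a timezone-aware collection timestamp; the purposes and retention periods below are hypothetical, since GDPR does not set specific numbers.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention periods per collection purpose. GDPR does not set
# these numbers; each organization must decide and justify its own.
RETENTION = {
    "contest_entry": timedelta(days=90),
    "abandoned_cart": timedelta(days=30),
}


def purge_expired(records, now=None):
    """Return only the records that are still within their retention period."""
    now = now or datetime.now(timezone.utc)
    kept = []
    for rec in records:
        limit = RETENTION.get(rec["purpose"])
        # No declared purpose means no reason to keep it "just in case".
        if limit is None:
            continue
        if now - rec["collected_at"] <= limit:
            kept.append(rec)
    return kept
```

Dropping records with no declared purpose mirrors the data minimization point: data kept "just in case" has no retention justification at all.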

The Right to be Forgotten

- If a user asks you to delete their data and you have no legal requirement to keep it, you must erase it.

GDPR shifts the concept from “company ownership” to “user rights.” The user “owns” their data; the company is a custodian.

CCPA

If you use tracking pixels (Meta Pixel, Google Ads, TikTok) to retarget users on other websites based on their behavior on your site, this is legally “sharing.” You must allow users to opt out of this, even if no money changes hands.

You generally need a conspicuous link on your homepage that says “Do Not Sell or Share My Personal Information” (or a single alternative opt-out link such as “Your Privacy Choices”).

Global Privacy Control (GPC) is Mandatory: If a user has set a GPC signal in their browser, you must respect it and not sell or share their data.
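Browsers with Global Privacy Control enabled send the HTTP request header `Sec-GPC: 1`. The sketch below shows the server-side decision of whether a retargeting pixel may fire. The function name and the `user_opted_out` flag (standing in for clicks on your opt-out link) are hypothetical; how you load headers and opt-out state depends on your own stack.

```python
def may_sell_or_share(headers: dict, user_opted_out: bool) -> bool:
    """Decide whether a visitor's data may be sold or shared for retargeting.

    headers: incoming HTTP request headers (names compared case-insensitively).
    user_opted_out: True if the visitor used your "Do Not Sell or Share" link.
    """
    normalized = {k.lower(): v.strip() for k, v in headers.items()}
    # A GPC-enabled browser sends "Sec-GPC: 1" with each request.
    gpc_signal = normalized.get("sec-gpc") == "1"
    # Either an explicit opt-out or a GPC signal blocks selling/sharing.
    return not (gpc_signal or user_opted_out)


print(may_sell_or_share({"Sec-GPC": "1"}, user_opted_out=False))  # False
print(may_sell_or_share({}, user_opted_out=False))                # True
```

Treating the GPC signal exactly like a click on the opt-out link reflects the slide's point that honoring GPC is not optional.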

Sensitive personal information includes a user’s precise geolocation. If the user has limited its use, marketers cannot use their precise location for ad targeting.

You cannot retarget or collect marketing data without transparency before the data is collected.

- On any lead generation form (e.g., email newsletter signup), you should link to your privacy policy close to the “Submit” button.

- You cannot collect emails for “Order Updates” and then pivot to using them for “Third-Party Partner Marketing” unless you disclosed that specific purpose at the time of collection.

ePrivacy Directive (Cookie Law)

This law was designed specifically for new digital technologies and to ease the advance of electronic communications services.

You must obtain prior informed consent before placing cookies or trackers (like the Meta Pixel, Google Analytics, or retargeting scripts) on a user’s device; only strictly necessary cookies are exempt.

You generally cannot send marketing emails or SMS texts to individuals (B2C) unless they have specifically opted in (consented) to receive them.

- The “Soft Opt-In” Exception: You can send marketing emails to existing customers without fresh consent if all three conditions are met:

- You obtained their email in the course of a sale (or negotiation for a sale).

- You are marketing your own similar products or services.

- You gave them a clear opportunity to opt-out (unsubscribe) when you first collected the email and in every subsequent message.
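Because the soft opt-in only works when every condition holds at once, it maps naturally to a single boolean check run before each send. The `Contact` fields and the function name below are hypothetical; the sketch simply encodes the three conditions listed above, plus honoring any later unsubscribe.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    email_from_sale: bool               # obtained during a sale or negotiation for a sale
    opt_out_offered_at_collection: bool
    unsubscribed: bool                  # has the contact used an opt-out since?


def soft_opt_in_applies(contact: Contact,
                        promotes_own_similar_products: bool,
                        message_has_unsubscribe: bool) -> bool:
    """True only when every soft opt-in condition holds for this message."""
    return (
        contact.email_from_sale                      # condition 1
        and promotes_own_similar_products            # condition 2
        and contact.opt_out_offered_at_collection    # condition 3, at collection...
        and message_has_unsubscribe                  # ...and in every later message
        and not contact.unsubscribed                 # an opt-out must be honoured
    )


buyer = Contact(email_from_sale=True, opt_out_offered_at_collection=True, unsubscribed=False)
print(soft_opt_in_applies(buyer, True, True))   # True
print(soft_opt_in_applies(buyer, False, True))  # False: promoting a partner's products
```

If the check fails, the fallback is the general rule: obtain explicit opt-in consent first.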


The directive leaves it up to individual EU member states to decide rules for B2B marketing (emails to corporate addresses like info@company.com).

You generally cannot use precise location data (from the network) for marketing purposes without explicit consent or anonymization.

Privacy Act and Spam Act

- Privacy Act

- Transparency: You must have a clearly expressed and up-to-date Privacy Policy.

- Direct Marketing:

- You generally cannot use personal information for direct marketing unless an exception applies (usually consent or reasonable expectation).

- It allows you to market to users if they would “reasonably expect” it (e.g., they bought a product from you, so they expect a newsletter about similar products), provided they can easily opt out.

- Spam Act

- Regulates commercial electronic messages (Email, SMS, MMS, Instant Messages).

- Compliance rests on a three-step rule:

- Consent: You must have the recipient’s consent (opt-in) to send commercial messages.

- Identification: The message must clearly identify the sender.

- Unsubscribe: You must provide a functional unsubscribe option in every message.
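The three-step rule can be run as a pre-send checklist. In this sketch a message is a plain dictionary with hypothetical keys (`recipient_consented`, `sender_name`, `unsubscribe_url`); a real check would also need to confirm that the unsubscribe link actually works, which a presence test cannot do.

```python
def spam_act_check(message: dict) -> list:
    """Return the steps of the three-step rule that a message fails."""
    problems = []
    # 1. Consent: the recipient must have agreed to receive commercial messages.
    if not message.get("recipient_consented", False):
        problems.append("consent")
    # 2. Identification: the sender must be clearly named.
    if not message.get("sender_name"):
        problems.append("identification")
    # 3. Unsubscribe: every message needs a way to opt out
    #    (only its presence is checked here, not that it works).
    if not message.get("unsubscribe_url"):
        problems.append("unsubscribe")
    return problems


draft = {"recipient_consented": True, "sender_name": "StrideCo", "unsubscribe_url": ""}
print(spam_act_check(draft))  # ['unsubscribe']
```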

PIPL, Algorithm Law, MIIT

PIPL

- Separate Consent

- If you want to transfer a Chinese user’s email to your headquarters in the US or Australia (Cross-Border Transfer), you need Separate Consent.

- If you collect “Sensitive Personal Information” (e.g., financial info, precise location, or biometric ID), you need Separate Consent.

- Data Localization:

- If you process a large amount of data (thresholds vary, often >1 million users), you generally must store that data on servers inside mainland China.

Algorithm Law

- The “Turn Off” Switch: You are legally required to give users an option to turn off personalized recommendations.

- If a user clicks this switch, you must stop showing them targeted ads or algorithmic content feeds and show them generic/chronological content instead.

- No “Big Data Killing” (Price Discrimination): You are strictly forbidden from using algorithms to charge different prices to different users based on their data (e.g., charging an iPhone user more for a hotel room than an Android user).
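The "Turn Off" switch amounts to branching on one user setting when building a feed. In this minimal sketch, the `Post` type and its `predicted_interest` score are hypothetical stand-ins for whatever a recommender model produces. With the switch on, posts are ranked by predicted interest; with it off, the user's profile is ignored and posts are shown newest first.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Post:
    post_id: str
    published_at: datetime
    predicted_interest: float  # score from a (hypothetical) recommender model


def build_feed(posts, personalization_on: bool):
    if personalization_on:
        # Algorithmic ranking: highest predicted interest first.
        return sorted(posts, key=lambda p: p.predicted_interest, reverse=True)
    # Switch turned off: ignore profile-based scores, show newest first.
    return sorted(posts, key=lambda p: p.published_at, reverse=True)


a = Post("a", datetime(2025, 1, 1), 0.9)
b = Post("b", datetime(2025, 1, 2), 0.1)
print([p.post_id for p in build_feed([a, b], personalization_on=True)])   # ['a', 'b']
print([p.post_id for p in build_feed([a, b], personalization_on=False)])  # ['b', 'a']
```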

MIIT Regulations

- SMS Marketing:

- Marketing SMS must usually include a signature in brackets, e.g., 【BrandName】, and distinct “Opt-Out” instructions (like “Reply to unsubscribe”).

- There are strict caps on how many messages you can send to a user per day/week.

- Email Marketing:

- The “Ad” Tag: Marketing emails generally require the word “Ad” (or Chinese equivalent) to be visible in the subject line.

- Consent: Strict opt-in is required

DUAA and PECR

- DUAA

- The “New” Cookie Rules: No need for consent for certain “low-risk” cookies.

- Analytics: Cookies used solely for statistical purposes to improve your service (e.g., basic Google Analytics).

- Functionality: Cookies that help the website work better (e.g., remembering a user’s font size or language preference).

- Security: Fraud prevention and security cookies.


- PECR

- You still generally need consent (Opt-In) to email private individuals unless you use the “Soft Opt-In.”

- The “Soft Opt-In”: You can email existing customers without a new checkbox if:

- You collected their details during a sale (or negotiation).

- You are marketing your own similar products.

- You gave them a chance to opt out then, and in every subsequent email.
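The contrast between the UK's newer cookie rules and the EU Cookie Law can be expressed as a lookup of consent-exempt purposes. This is a deliberately simplified reading of the slides: the purpose labels are hypothetical, and the DUAA exemptions come with conditions (such as telling users about the cookies and letting them object) that this sketch does not model.

```python
# Simplified reading of the slides: purposes treated as "low-risk" in the UK.
UK_EXEMPT = {"strictly_necessary", "analytics", "functionality", "security"}
# Under the EU ePrivacy Directive, only strictly necessary cookies are exempt.
EU_EXEMPT = {"strictly_necessary"}


def needs_cookie_consent(purpose: str, jurisdiction: str) -> bool:
    """True if a cookie with this purpose needs prior consent in the jurisdiction."""
    exempt = {"UK": UK_EXEMPT, "EU": EU_EXEMPT}.get(jurisdiction)
    if exempt is None:
        raise ValueError(f"no rule set defined for {jurisdiction!r}")
    return purpose not in exempt


print(needs_cookie_consent("analytics", "UK"))    # False
print(needs_cookie_consent("analytics", "EU"))    # True
print(needs_cookie_consent("advertising", "UK"))  # True
```

Advertising and retargeting cookies need consent in both jurisdictions, so the Meta Pixel example from the ePrivacy section is unaffected by the UK changes.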

DPDP

Consent: You need free, specific, informed, and unconditional consent.

You must give users the option to read your Privacy Notice and Consent requests in English or any of the 22 languages listed in the Eighth Schedule of the Constitution (e.g., Hindi, Tamil, Bengali, Telugu).

The law created a new class of entities called Consent Managers. These are centralized platforms (registered with the government) where users can manage all their consents in one place. Your marketing stack must eventually be able to “talk” to these Consent Managers to check if a user has revoked permission.

Protecting Children Online

- Children’s Online Privacy Protection Act (COPPA) (US)

- Parental Consent: Requires verifiable parental consent before collecting personal information from children under 13.

- Privacy Policy: Mandates a clear and comprehensive privacy policy detailing data collection practices.

- Data Security: Obligates operators to implement reasonable security measures to protect children’s data.

- Children’s Online Privacy Code (Australia)

- Age Verification: Requires age verification mechanisms to prevent children from accessing certain online services without parental consent.

- Parental Control: Encourages the implementation of parental control features to help parents manage their children’s online activities.

- Data Minimization: Emphasizes collecting only the necessary data from children and ensuring its secure handling.

- Separately, Australia’s social media minimum age law bars children under 16 from holding accounts on age-restricted social media platforms.
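The two age thresholds above (COPPA's parental consent for under-13s in the US and the under-16 social media restriction listed for Australia) can be combined into one sign-up gate. This sketch assumes the user's age has already been established by some verification step, which is the genuinely hard part and is not shown. The function name, parameters, and return values are all hypothetical.

```python
def account_gate(age: int, jurisdiction: str, is_social_media: bool,
                 parental_consent: bool) -> str:
    """Return 'allow', 'needs_parental_consent', or 'block' for a sign-up."""
    # Australia: under-16s may not hold accounts on social media platforms.
    if jurisdiction == "AU" and is_social_media and age < 16:
        return "block"
    # US (COPPA): verifiable parental consent before collecting data from under-13s.
    if jurisdiction == "US" and age < 13 and not parental_consent:
        return "needs_parental_consent"
    return "allow"


print(account_gate(12, "US", is_social_media=False, parental_consent=False))  # needs_parental_consent
print(account_gate(15, "AU", is_social_media=True, parental_consent=True))    # block
```

Note that in the Australian case parental consent does not unlock access, which is the key difference from the COPPA model.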

Reflection

Scenario

You are the Digital Marketing Lead based in the US for a new sneaker brand launching globally. You have proposed three specific marketing tactics.

Tactic A (The Tracker): “We will put a Meta Pixel on our homepage to retarget everyone who visits us, regardless of where they click.”

Tactic B (The Cold Email): “We bought a list of corporate email addresses (e.g., ceo@shoe-store.com). We will send them a cold sales pitch.”

Tactic C (The Data Lake): “We will store all our global customer data, including Chinese and Indian customers’ ID info, on a single AWS server in California.”

The Task: For each tactic, and based on the regulations we just covered, identify one region where it is risky or illegal and explain why.

Hints:

- Think about EU (Cookie Law) vs. USA (CCPA).

- Think about UK vs. Germany (ePrivacy) vs. Australia.

- Think about China (PIPL) and India (DPDP).

Platform Guidelines

Major Platforms

Social Media Platforms

- Network-focused social media

- Media-focused social media

- Discussion-focused social media

- Review-focused social media

- Shopping-focused social media

E-commerce Platforms

Online Ad Platforms

The issues of ethics, data privacy, and data security are evolving and regulators are still catching up. Therefore, each platform has its own set of guidelines and policies that marketers must adhere to when using their services for digital marketing purposes.

Online Content

- Influencer-generated Content (IGC)

- Sponsored IGC

- Disclosed

- Undisclosed

- Non-sponsored IGC

- Advocacy

- User-generated Content (UGC)

- Sponsored IGC

- Reviews and Ratings

- Expert Reviews (e.g., critic ratings)

- User Reviews (e.g., customer ratings)

- Third-party Reviews (e.g., review aggregators)

Facebook/Instagram Guidelines

Branded Content: Content for which the creator has been compensated, either with money or something else of value, by a brand or business partner. This may include when products and services have been gifted for free.

- Creators must tag the brand or business partner when posting branded content

- Content that features an affiliate link should have the Paid partnership label

Meta Advertising Standards: Ads must adhere to Meta Advertising Standards.

Advertising policy basics checklist: A checklist to help you understand the basics of Facebook’s advertising policies.

Examples

X/Twitter

Advertisers on X are responsible for their X Ads. This means following all applicable laws and regulations, creating honest ads, and advertising safely and respectfully. This article describes our advertising policies. Our policies require you to follow the law, but they are not legal advice.

- Prohibited Content

- Deceptive and Fraudulent Content

- Hateful Content

- Prohibited Content for Minors

- Weapons and Weapon Accessories

- Restricted Content

- Adult Content

- Alcohol

- Drugs and Drug Paraphernalia

- Financial Products and Services

- Gambling and Games

- Healthcare and Pharmaceuticals

- Political Content

- Tobacco and Tobacco Accessories

- Paid Partnership Ad

- Posts that are part of a Paid Partnership published as an organic Post must include clear and conspicuous language indicating the commercial nature of such content, like “Ad” or “Promoted Content.”

TikTok

Sponsored Content: When posting content that promotes a brand, product, or service on TikTok, you must turn on the content disclosure setting. This ensures that you’re transparent about the type of content you’re posting and helps build and maintain trust between the TikTok community and advertisers. It can also let people know if a commercial relationship exists between you and a brand, if applicable.

Content Disclosure Setting: The content disclosure setting allows you to add a disclosure to your post to clearly indicate that your content is commercial in nature. This includes content that promotes a brand, product, or service, whether you’re promoting your own business or posting branded content on behalf of a third party, such as a company.

Reasons for Non-Disclosure?

Non-disclosed sponsored content is posted covertly, concealing the influencers’ brand partnership.

Influencers may be tempted to post non-disclosed sponsored content due to the following reasons, despite mandates from social media platforms or regional laws.

Influencers receive payments from brands indirectly (e.g., free samples).

Influencers may want sponsored eWOM to pass as organic posts, which are perceived as more authentic and credible; this can increase engagement with the post and encourages concealing the brand sponsorship.

Algorithmic (e.g., social media platforms’ preference for promoting organic content) and non-algorithmic challenges (e.g., lengthy disclosure process), along with shortcomings in platform guidelines and regional laws, could prompt influencers to post non-disclosed sponsored content.

Google Ads

“Sensitive Events” Policy: Google enforces a “Sensitive Events” policy that immediately blocks ads that try to profit from natural disasters, wars, or public health emergencies (e.g., price gouging, essential goods during a crisis, and victim blaming).

Enabling Dishonest Behavior: Google explicitly bans the promotion of products designed to mislead others under its “Enabling Dishonest Behavior” policy. This creates a global ban on hacking software, fake documents (IDs/Passports), and academic cheating services, acting as a moral arbiter where the law is silent.

Google Ads (2)

Misrepresentation & Clickbait: Google’s “Misrepresentation” policy bans “Clickbait ads” that use negative life events (death, bankruptcy, arrests) to induce fear or guilt. It also bans “Misleading Ad Design,” such as ads that mimic system warnings or have buttons that don’t work, enforcing a quality standard that laws do not touch.

Financial Services Verification (Pre-emptive Gatekeeping vs. Post-Hoc Punishment): Google effectively acts as a private regulator by requiring “Financial Services Verification.” In many countries, advertisers must demonstrate they are licensed by the relevant local authority (and pass a third-party check, often via G2) before they are allowed to show a single ad. This turns a reactive legal system into a proactive platform gatekeeper.

Dangerous Products (Global Safety Standards vs. Local Rights): Google imposes a supra-national safety standard via its “Dangerous Products or Services” policy. It bans the advertising of guns, ammunition, and explosive materials globally, regardless of whether the local law permits it. This illustrates a platform enforcing a global safety standard that overrides local permissive laws.

Google Ads (3)

Personalized Ad Targeting (Ethical “Creepiness” vs. Legal Data Use): Google imposes an ethical layer on top of legal consent via its “Personalized Advertising” policy. Even if a user consents to being tracked, advertisers are strictly banned from targeting ads based on “sensitive interest categories” like financial hardship, medical history, or negative emotional states. You cannot target an ad to “people interested in bankruptcy services” or “people searching for divorce lawyers,” even if you legally own that data.

Limited Ad Serving: Google operates on a “probationary” model for new advertisers. Under the “Limited Ad Serving” policy, new accounts (especially those with unclear brand relationships) have their impressions throttled solely because they are new. They must “earn” trust over time or undergo identity verification to unlock full reach. This reverses the burden of proof: you are treated as a potential risk until you prove you are safe.

Amazon

Make sure the ad:

- Has a clear, action-oriented CTA in sentence case (for onsite)

- Contains necessary disclosures in the ad and/or landing page

- Meets the creative bar – crisp, clean images; not overly cluttered; appealing to look at

- Clearly identifies the brand and product being advertised

- Has pricing and savings claims that are the same on the ad and landing page

Make sure the ad does not:

- Have text that is too small or otherwise illegible

- Contain overly bright or distracting colors

- Blend into the background – the ad’s background should either contrast with a white background or contain a 1-pixel non-white border

- Contain animations over 15 seconds long (for onsite)

- Exceed word count limits
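A few items on this checklist are mechanical enough to check before submission; others (creative quality, legibility, color) still need human review. This sketch covers only three machine-checkable items from the lists above. The `AdDraft` fields are hypothetical, and prices are stored in integer cents so the "same price on ad and landing page" check is an exact comparison rather than a floating-point one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdDraft:
    ad_price_cents: int
    landing_page_price_cents: int
    animation_seconds: Optional[float]  # None for a static ad
    placement: str                      # "onsite" or "offsite"
    brand_name: str


def preflight_issues(ad: AdDraft) -> list:
    """Return human-readable problems found in a draft ad."""
    issues = []
    # Pricing claims must be the same on the ad and the landing page.
    if ad.ad_price_cents != ad.landing_page_price_cents:
        issues.append("price mismatch between ad and landing page")
    # Onsite animations must not run longer than 15 seconds.
    if (ad.placement == "onsite" and ad.animation_seconds is not None
            and ad.animation_seconds > 15):
        issues.append("onsite animation longer than 15 seconds")
    # The ad must clearly identify the brand being advertised.
    if not ad.brand_name.strip():
        issues.append("brand not identified")
    return issues


print(preflight_issues(AdDraft(1999, 2499, 20, "onsite", "StrideCo")))
```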

Campaign setup steps:

- Set a campaign duration and budget.

- Select a campaign goal (drive page visits, grow brand impression share, or reserve share of voice). We use this to recommend bidding and targeting strategies later in the campaign setup process that align with your chosen goal.

- Choose an ad format to determine what shoppers see when they view your ad.

- Product collection: Showcase multiple products or promote existing Stores.

- Store spotlight: Drive traffic to your Store (it must have at least 4 pages, each with 1 or more unique products).

- Video: A video ad of a single product or brand that directs shoppers to a product detail page or your Store.

- Pick products or a Store to showcase in your ad.

- Decide how much to bid for this ad placement, and whether you want to target certain keywords, products, or audiences (these options vary depending on the campaign goal and ad format you chose).

- Add your brand name, logo, a headline, and images to your ad. In this step, you can preview what your ad will look like to shoppers.

You can choose to use a cost per click (CPC) or cost per 1,000 viewable impressions (VCPM) payment structure.

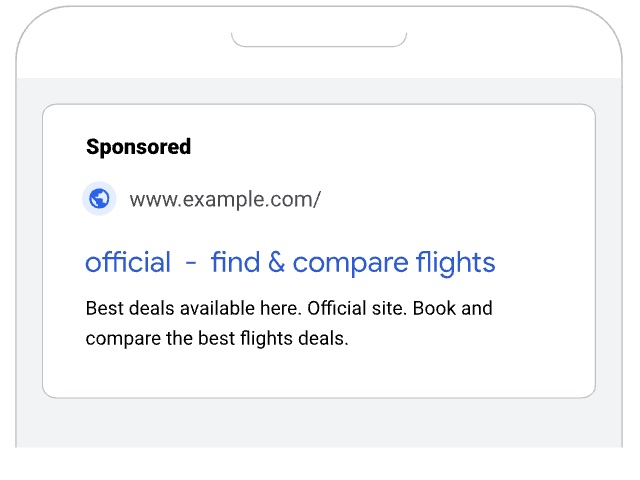

Rotten Tomatoes

An online review aggregator for movies and TV shows that also serves as a recommender.

Tomatometer: The Tomatometer score represents the percentage of professional critic reviews that are positive for a given film or television show.

Popcornmeter: The Popcornmeter, which captures audience sentiment, is represented by a popcorn bucket and indicates the percentage of fans who have rated a movie or TV show positively.

Rotten Tomatoes (2)

Ripe for a kicking: Hollywood’s love-hate relationship with Rotten Tomatoes: Twenty years after its launch, the movie-review aggregator’s verdict is now seen as vital to a film’s success or failure. Is the site too influential for its own good? When movies bomb, the studios have been quick to blame Rotten Tomatoes.

Martin Scorsese on Rotten Tomatoes, Box Office Obsession and Why ‘Mother!’ Was Misjudged: The legendary director is critical of the outsize influence of Tomatometer ratings and Cinemascore grades, adding that “good films by real filmmakers aren’t made to be decoded, consumed or instantly comprehended.”

Rotten Tomatoes Is Cooked — Why Fans Don’t Buy the Hype Anymore: Studios have been gaming Rotten Tomatoes for years. From hand-picking critic screenings to embargo shenanigans and “critic curation” (yep, that’s a thing), studios know how to tilt the score in their favor.

What about AI-generated Content?

Google: Google’s AI disclosure policy for political ads requires verified election advertisers to prominently disclose if their ads contain “synthetic content” that inauthentically depicts real people or events. If a campaign uses AI to make a candidate say something they didn’t, the advertiser must check a box that generates a “Generated by AI” label, or the ad is blocked.

Meta: Meta’s “Labeling AI-Generated Content and Manipulated Media” policy adds “AI info” labels (previously “Made with AI”; earlier still, “Imagined with AI”) to a wider range of video, audio, and image content when Meta detects industry-standard AI image indicators or when people disclose that they’re uploading AI-generated content.

TikTok: Creators can disclose content as AI-generated directly on the post by adding text, a hashtag sticker, or context in the post’s description. TikTok also offers two labels to let viewers know when content was made using AI: the Creator label and the Auto label.

Tools

Meta Blueprint: Discover online learning courses, training programmes and certification that can help you get the most out of Meta technologies.

Amazon Ads Academy: Elevate your advertising skills with our educational resources and trainings designed to help you learn how to use Amazon Ads. Explore courses, certifications, videos, and more that provide the insights and strategies you need to meet your business goals.

TikTok Academy: The official TikTok learning destination for marketers and agencies.

Master Google Tools: Learn and get certified for Google Ads, Google Analytics, Google Marketing Platform, Google My Business, and more.

Reflect

Discussion Point: When a government bans something, you can break the law and face a judge later. When a platform (like Google or Meta or TikTok) bans something (like an unverified political ad), the code literally prevents the ‘publish’ button from working. Is this absolute enforcement better for safety, or dangerous for freedom?

- For safety, scale and speed we need platforms to regulate.

- The internet is too fast and dangerous for slow courts. We need walls, not signs.

- For Freedom, Nuance, & Accountability we need human judges.

- Code lacks the human judgment required for justice. It creates a dictatorship without appeal.

Think about these scenarios critically:

The “Ambulance” Scenario: If we put a digital speed governor on every car to stop speeding (Code Law), we would save thousands of lives. But if your family member is dying in the back seat, the car still won’t let you drive fast to the hospital. Is that a trade-off you accept?

The “Shadow Ban”: If the government bans a book, it’s a public scandal. If an algorithm downranks a video so nobody sees it, nobody knows it happened. Which is more dangerous: visible censorship or invisible censorship?

Email Marketing, Ethics, and Regulation

Spam

Spam is unsolicited, often repetitive and annoying messaging sent over email or other electronic communication systems.

Legal Considerations

CAN-SPAM Act (US): A law that sets the rules for commercial email, establishes requirements for commercial messages, gives recipients the right to have you stop emailing them, and spells out tough penalties for violations (FTC).

The CAN-SPAM Act identifies three kinds of content an email message can contain:

- Commercial content - that advertises or promotes a commercial product or service

- Transactional or relationship content - that facilitates an already agreed-upon transaction or updates a customer about an ongoing transaction

- Other content - which is neither commercial nor transactional or relationship.

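If the message contains only commercial content, its primary purpose is commercial and it must comply with the requirements of the CAN-SPAM Act. If it mixes commercial and transactional or relationship content, its primary purpose is commercial when a recipient reasonably reading the subject line would conclude it is an advertisement, or when the transactional or relationship content does not appear mainly at the beginning of the message.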

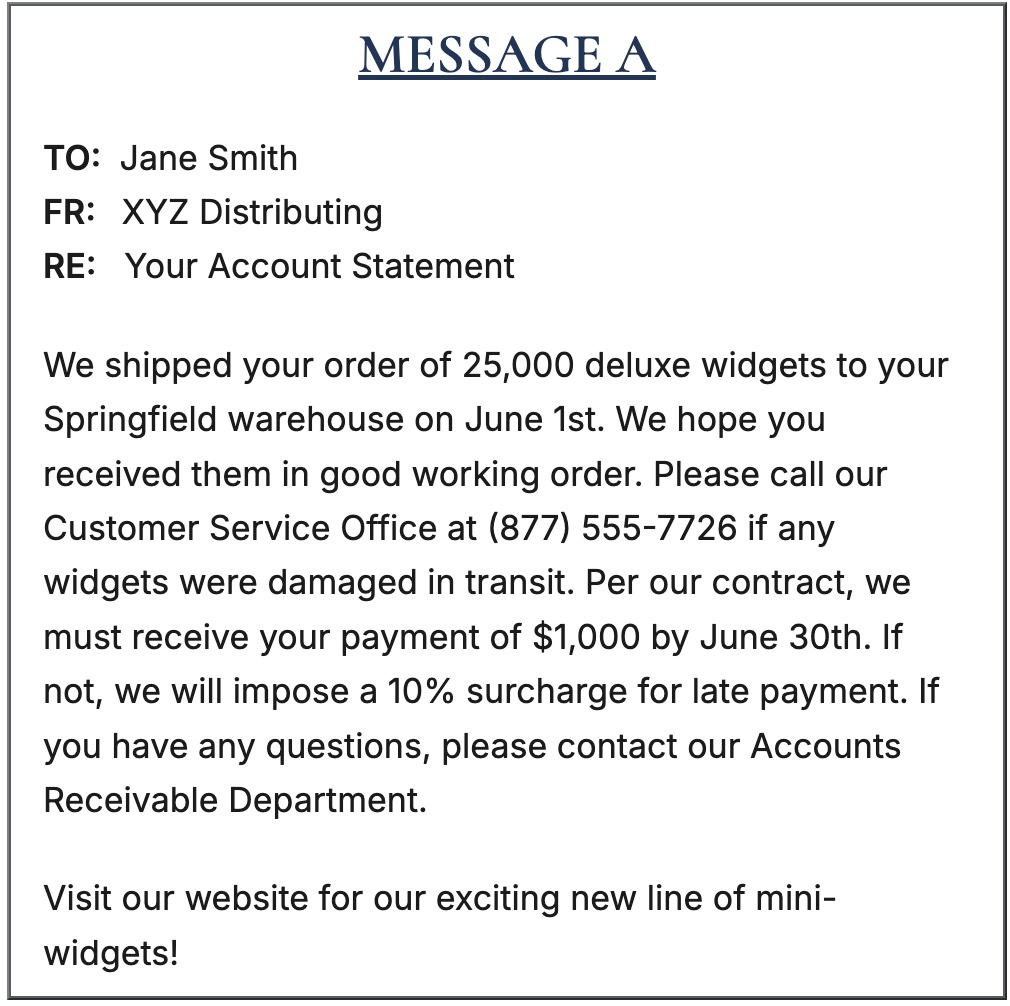

Example

MESSAGE A is most likely a transactional or relationship message subject only to CAN-SPAM’s requirement of truthful routing information. One important factor is that information about the customer’s account is at the beginning of the message and the brief commercial portion of the message is at the end.

MESSAGE B is most likely a commercial message subject to all CAN-SPAM’s requirements. Although the subject line is “Your Account Statement” – generally a sign of a transactional or relationship message – the information at the beginning of the message is commercial in nature and the brief transactional or relationship portion of the message is at the end.
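The reasoning behind MESSAGE A and MESSAGE B follows the FTC's primary-purpose test for messages that mix commercial and transactional or relationship content. This rough sketch encodes that test as a decision function. The two judgment calls (how a reasonable recipient reads the subject line, and whether the transactional part comes mainly at the beginning) are made by a person and passed in as booleans. Mixes of commercial and "other" content follow a different test, which the sketch does not model.

```python
def primary_purpose(has_commercial: bool, has_transactional: bool,
                    subject_looks_commercial: bool,
                    transactional_comes_first: bool) -> str:
    """Classify a message as 'commercial', 'transactional', or 'other'.

    Messages mixing commercial and "other" content follow a different FTC
    test; this sketch simply treats them as commercial.
    """
    if not has_commercial:
        return "transactional" if has_transactional else "other"
    if not has_transactional:
        return "commercial"
    # Mixed commercial + transactional/relationship content.
    if subject_looks_commercial or not transactional_comes_first:
        return "commercial"
    return "transactional"


# MESSAGE A: neutral subject, account details first, short promo at the end.
print(primary_purpose(True, True, False, True))   # transactional
# MESSAGE B: "Your Account Statement" subject, but the promo comes first.
print(primary_purpose(True, True, False, False))  # commercial
```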

Tools to Check Spam

- https://www.mail-tester.com/

- https://www.experte.com/spam-checker/isnotspam

- https://www.litmus.com/

- https://spamassassin.apache.org/

To test a campaign, send the email to an address you control (or to the test address a tool provides) and check whether the received message is likely to be treated as spam.

Canadian Anti-Spam Legislation (CASL)

CASL was introduced to prevent spam, protect privacy, and ensure that businesses use electronic communications (like email) responsibly.

Key Provisions:

- Consent Requirement: Businesses must obtain explicit consent from recipients before sending commercial electronic messages (CEMs).

- Identification: CEMs must clearly identify the sender and provide contact information.

- Unsubscribe Mechanism: Every CEM must include a clear and easy way for recipients to unsubscribe from future messages. It should be a single-click.

- Record Keeping: Businesses must maintain records of consent and unsubscribe requests for at least three years.

Two types of consent

- Express Consent: Explicit permission given by the recipient (e.g., checking a box on a website).

- Implied Consent: Occurs in specific situations, such as an existing business relationship (e.g., a purchase within the past two years) or when the recipient has made an inquiry within the last six months.
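Because implied consent expires, a sender needs to check dates before every send. This sketch assumes the two windows commonly associated with CASL: two years from a purchase and six months from an inquiry. It also assumes express consent stays valid until the recipient unsubscribes. The helper and function names are hypothetical, and real eligibility rules have more cases than shown here.

```python
import calendar
from datetime import date
from typing import Optional


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of the month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def has_valid_consent(today: date, express: bool,
                      last_purchase: Optional[date] = None,
                      last_inquiry: Optional[date] = None,
                      unsubscribed: bool = False) -> bool:
    # An unsubscribe request withdraws every form of consent.
    if unsubscribed:
        return False
    # Express consent remains valid until the recipient withdraws it.
    if express:
        return True
    # Implied consent from an existing business relationship: purchase within 2 years.
    if last_purchase is not None and today <= add_months(last_purchase, 24):
        return True
    # Implied consent from an inquiry: within 6 months.
    if last_inquiry is not None and today <= add_months(last_inquiry, 6):
        return True
    return False


print(has_valid_consent(date(2025, 9, 1), express=False, last_inquiry=date(2025, 1, 15)))  # False
```

Storing the purchase and inquiry dates is also what makes the record-keeping requirement above practical.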

Violation of CASL law can result in significant fines and penalties.

Reflect

Consider an email you recently received from a company for marketing purposes.

Use MxToolbox to check whether the email’s sending domain is on a blacklist.

Did the email comply with the CASL/CAN-SPAM requirements?

Was there clear consent obtained before sending the email?

Did the email include proper identification of the sender and contact information?

Was there an easy-to-use unsubscribe mechanism provided?

Ethical Frameworks for Digital Marketing

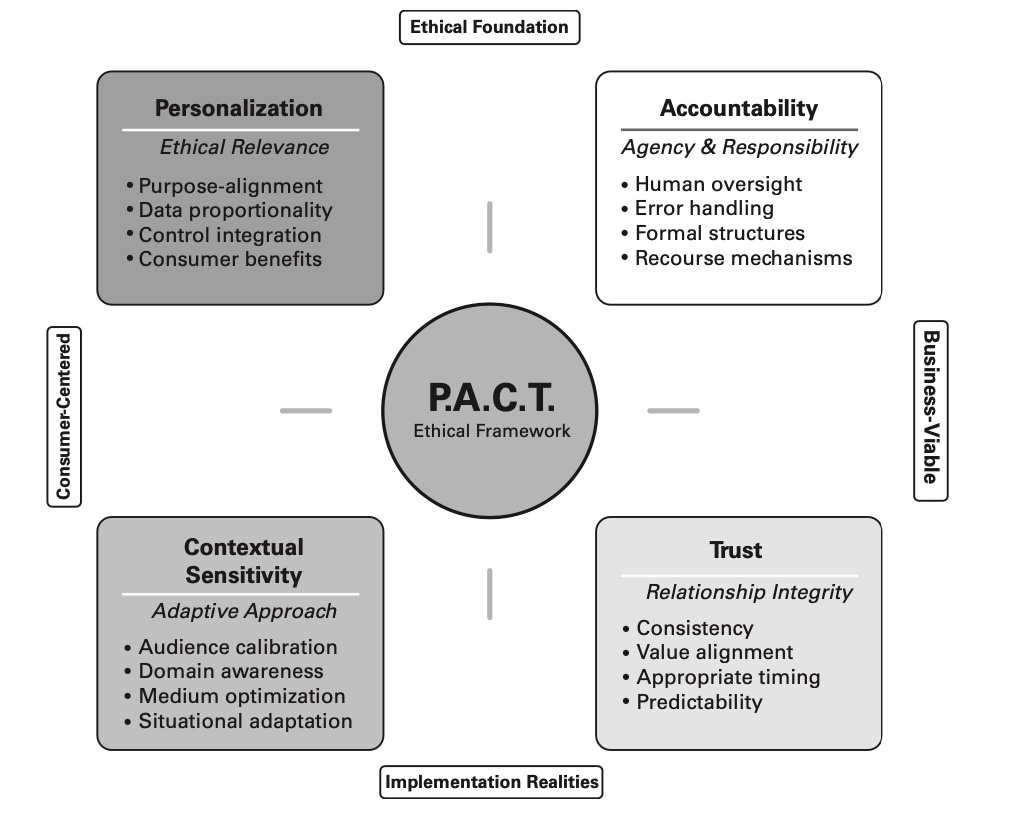

P.A.C.T. Framework

Personalization: Balancing relevance with consumer privacy.

Accountability: Establishing governance for ethical compliance.

Contextual Sensitivity: Adapting campaigns to cultural and real-time events.

Trust: Ensuring transparency to build consumer confidence.

The P.A.C.T. Framework focuses on creating marketing strategies that respect consumer privacy, maintain transparency, and foster long-term trust.

PACT in Action

Personalization with Purpose

A leading retail company adopted the P.A.C.T. Framework to enhance the personalization of its e-commerce platform. Rather than relying solely on purchase history for product recommendations, the company introduced a consent-driven personalization system where customers could select their values (such as sustainability, eco-friendliness, or diversity) to receive recommendations aligned with those principles. The result was a more engaged and satisfied customer base that felt in control of their shopping experience and appreciated the company’s commitment to aligning with their values.

Contextual Sensitivity in Global Campaigns

A multinational consumer goods company used contextual sensitivity to refine its global marketing campaigns. The company’s AI system analyzed real-time social media trends and localized data to adjust messaging in different regions, avoiding a one-size-fits-all approach. For example, during a sensitive period in one region, the company paused certain campaigns to avoid appearing insensitive and instead focused on culturally relevant content. This adaptive approach resulted in positive consumer feedback and greater brand loyalty.

Accountability in AI-Driven Marketing

A global tech firm struggled to align its AI-powered ad platform with GDPR requirements. Applying P.A.C.T.’s accountability pillar, it set up adaptive governance structures, including real-time monitoring tools and regular AI audits. The firm’s internal AI ethics committee identified potential biases in how ads were being targeted to different demographic groups and implemented corrective measures to ensure fairness. As a result, ads were targeted fairly while maintaining consumer privacy, trust in the platform increased, and the company avoided €20M in potential fines.

Trust Measurement Framework

Traditional vs. Digital Marketing

While traditional marketing builds trust through brand familiarity and consistent experiences, building trust in digital marketing involves additional psychological dimensions related to automation, decision-making autonomy, and perceived control.

Trust in AI is multi-dimensional, and it’s shaped by how people feel, think, and behave in real time when interacting with automated systems. Traditional metrics like NPS (Net Promoter Score) and CSAT (Customer Satisfaction Score) tell you whether a customer is satisfied, but not why they trust (or don’t trust) your digital automation systems.

Three dimensions of Trust

- Behavioral Trust: What customers do.

- Emotional Trust: What customers feel.

- Cognitive Trust: What customers think.

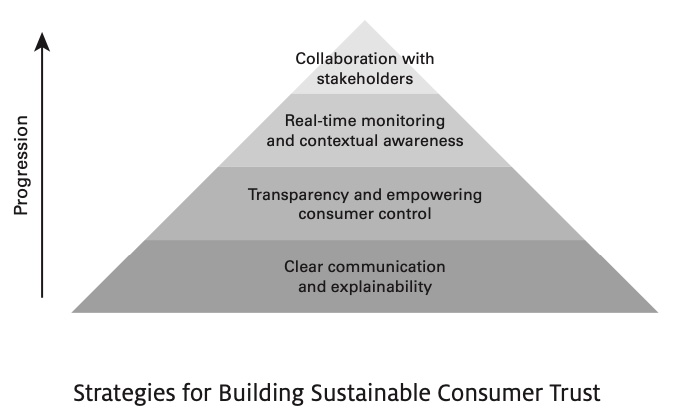

Strategies to Build Trust

- Behavioral Trust: When customers engage frequently, opt in to data sharing, or return to your digital tools repeatedly, that’s a sign of behavioral trust.

- Repeat engagement with automated tools (e.g., product recommendation, chatbots, email marketing)

- Emotional Trust: The humanization of digital tools (e.g., the voice of an assistant, the empathy in a chatbot’s reply, or how “human” a recommendation feels) plays into consumers’ emotional trust.

- Positive emotional responses to AI interactions (e.g., feeling understood by a chatbot)

- Cognitive Trust: When your digital tools explain themselves clearly, or when customers understand what the tools can and can’t do, customers are more likely to trust the output.

- Clear understanding of AI decision-making processes (e.g., transparency in how recommendations are generated)
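One possible way to turn these three dimensions into analytics is to compute one or two indicators per dimension. Every metric below is a hypothetical choice made for illustration, not part of the framework itself. Behavioral trust is measured as the repeat-use rate and data-sharing opt-in rate, emotional trust as the average post-chat rating (say, on a 1 to 5 scale), and cognitive trust as the share of surveyed users who say they understand why a recommendation was shown. The sketch assumes all inputs are non-empty.

```python
from statistics import mean


def trust_dashboard(sessions_per_user: dict, opted_in: dict,
                    chatbot_ratings: list, understands_recs: list) -> dict:
    """Summarize hypothetical behavioral, emotional, and cognitive indicators."""
    users = list(sessions_per_user)
    behavioral = {
        # Share of users who came back to the automated tools at least twice.
        "repeat_use_rate": sum(1 for u in users if sessions_per_user[u] >= 2) / len(users),
        # Share of users who opted in to data sharing.
        "opt_in_rate": sum(1 for u in users if opted_in.get(u)) / len(users),
    }
    # Average rating users gave after a chatbot conversation.
    emotional = {"avg_chatbot_rating": mean(chatbot_ratings)}
    # Share of survey answers saying "I understand why I saw this recommendation".
    cognitive = {"understands_recs_rate": sum(understands_recs) / len(understands_recs)}
    return {"behavioral": behavioral, "emotional": emotional, "cognitive": cognitive}


print(trust_dashboard({"u1": 3, "u2": 1}, {"u1": True}, [4, 5, 3], [True, False, True, True]))
```

Reporting the three dimensions side by side, rather than folding them into one score, keeps visible the case where customers keep using a tool (behavioral) without understanding it (cognitive).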

Sustainable Consumer Trust

Reflection

Consider the Trust Measurement Framework.

How might you integrate the three dimensions of consumer trust into your organization’s marketing analytics practices?

- behavioral trust

- emotional trust

- cognitive trust

Staying ahead of emerging trust challenges is essential for brands seeking to establish long-term, sustainable relationships with consumers in a digital marketing environment. Failure to address these issues proactively can erode trust and damage brand reputation.

Thank You!

🙏

Q&A